When one thinks of the term crowdsourcing, practices related to business, marketing, and/or consumerism first come to mind. In academia, the idea of crowdsourcing seems most relevant to science disciplines or statistics. However, over the past few years the idea of crowdsourcing has been co-opted by the digital humanities. In the digital humanities, the practice of crowdsourcing involves primary sources and an open call to the general public with an invitation to participate.

There are pros and cons to crowdsourcing DH-related projects. Certainly having the benefit of many people working on a common project that serves a greater good is a pro. In turn, the project gains more attention because of the traffic generated by people who feel invested and share the site with others. On the other hand, with many people participating there is more room for error and inconsistency. Another con is the supervision and site maintenance needed to answer contributor queries, correct errors, and manage a project that is constantly changing with new transcriptions and uploads.

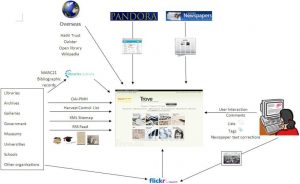

The four projects analyzed for this module reflect a range of likely contributors, interfaces, and approaches to community building. For example, Trove, which crowdsources annotations and corrections to scanned newspaper text in the collections of the National Library of Australia, has around 75,000 users who have produced nearly 100 million lines of corrected text since 2008 (Source: Digital Humanities Network, University of Cambridge). Trove’s interface is user-friendly, but the organization and number of sources are overwhelming.

A second project, the Papers of the War Department (PWD), uses MediaWiki and Scripto (an open-source transcription tool), which work well and present a very finished and organized interface. PWD has over 45,000 documents and promotes the project as “a unique opportunity to capitalize on the energy and enthusiasm of users to improve the archive for everyone.” The PWD also calls its volunteers “Transcription Associates,” which lends weight and credibility to their hard work.

Building Inspector is like a citywide scavenger hunt or game. Its interface is clean, clearly explained, and engaging, and the barriers to contributing are minimal. In fact, it is designed for use on mobile devices and tablets. As stated on the project site: “[Once] information is organized and searchable [with the public’s help], we can ask new kinds of questions about history. It will allow our interfaces to drop pins accurately on digital maps when you search for a forgotten place. It will allow you to explore a city’s past on foot with your mobile device, ‘checking in’ to ghostly establishments. And it will allow us to link other historical documents to those places: archival records, old newspapers, business directories, photographs, restaurant menus, theater playbills etc., opening up new ways to research, learn, and discover the past.” Building Inspector has approximately 20 professionals on its staff connected either directly to the project or to NYPL Labs.

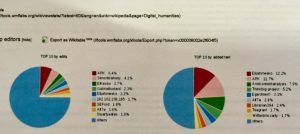

Finally, Transcribe Bentham uses MediaWiki. It is sponsored by the University of London and funded by the European Commission Horizon 2020 Programme for Research and Innovation. It was previously funded by the Andrew W. Mellon Foundation and the Arts and Humanities Research Council. The project also asks volunteers to encode their transcripts in Text Encoding Initiative (TEI)-compliant XML; TEI is a de facto standard for encoding electronic texts. Contributing therefore requires a bit more technical savvy, and the audience is likely smaller: fans, students, or enthusiasts of Jeremy Bentham and his writings. As a contributor, I worried about “getting it wrong,” especially with such important primary texts. The sources’ handwriting, alternative spellings, unfamiliar vocabulary, and older, more formal version of English made this a daunting task for me. One benefit of this project is that contributors can create tags. In total, Transcribe Bentham has 35,366 articles and 72,017 pages. There have been 193,098 edits so far, and the site is 45% complete. There are 37,183 registered users, including 8 administrators.

As noted by digital humanists in the HIST680 video summaries, the bulk of the work is actually done by a small group of highly committed volunteers who see their designated project as a job. Another group that regularly contributes is composed of undergraduate and graduate students working within a project like Transcribe Bentham as a part of their coursework. A final group consists of volunteers who are willing to share their specialized knowledge with these research, museum, literary, or cultural heritage projects.

Crowdsourcing is an amazing tool that can be used to create a sense of community as well as a large body of digitized, accessible text. I think one major factor to remember when considering successful crowdsourced DH projects is the sheer scope of the work from several standpoints: informational, tech infrastructure, institutional, managerial, public value, and funding. Successful crowdsourcing methods applied to DH-related digitization and transcription projects require a dedicated, knowledgeable, well-funded, interdisciplinary team based within an established institution, whether that be an educational institution or a government agency. In other words, it is an enormous (and enormously admirable and useful) undertaking. But for now, I will simply have to admire academic crowdsourcing as an advocate and user.